Article by: Suresh Andani

May 7, 2026 – As organizations adopt AI, many discover that their infrastructure struggles to keep up. Running AI in the cloud is an option, but the cloud can introduce privacy concerns and unpredictable costs. Upgrading on-prem infrastructure is another option, but supporting large GPU-accelerator platforms can require expensive redesigns in data center power and cooling.

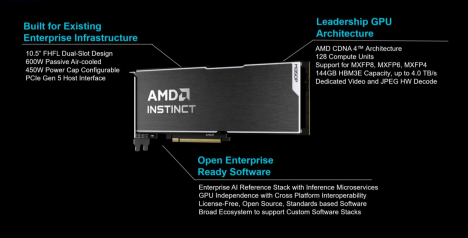

Our new AMD Instinct™ MI350P PCIe® cards give your enterprise a third option: leadership AI performance designed to fit the data center infrastructure you already own.

Performance That Drops into Your Existing Racks

Designed to help you prepare for the agentic AI era, AMD Instinct MI350P PCIe cards are dual-slot drop-in cards for standard air-cooled servers. They are built to deploy inference on premises within your current data center’s power, cooling, and rack infrastructure. AMD Instinct GPUs in cost-effective PCIe cards round out the AMD AI compute portfolio, providing a range of options for your enterprise as it navigates its unique AI adoption curve.

The PCIe card form factor is an excellent choice for enterprises that need more AI computing power than CPUs can provide but aren’t ready to invest in dedicated GPU accelerator platforms. Available in air-cooled systems with up to eight accelerator cards, AMD Instinct MI350P PCIe cards are ideal for small, medium and large AI models for inference and RAG pipelines.

Don’t Just Scale AI. Scale ROI.

AMD Instinct MI350P PCIe cards are engineered to deliver leadership AI performance at an excellent cost. Key features help increase performance, simplify deployment and reduce costs so you can move from evaluation to real outcomes:

- Native support for lower-precision MXFP6 and MXFP4, which deliver high throughput.
- Acceleration through sparsity support for most mainstream 8- and 16-bit precisions.
- Estimated 2,299 teraflops (TFLOPS) and up to 4,600 peak TFLOPS at MXFP4, the highest performance currently available in an enterprise PCIe card.
- Estimated 144GB of high bandwidth memory 3e (HBM3E) running at up to 4TB/s.
- Open ecosystem with low- and no-cost development stack options simplifies deployment and helps lower operating expenses.
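As a rough illustration of how the compute and memory figures above relate, the sketch below applies the simple roofline model: the ratio of peak compute to memory bandwidth gives the arithmetic intensity (FLOPs per byte moved) at which a kernel stops being limited by memory bandwidth. The numbers are the estimated figures quoted in this article. The function names are hypothetical, and real kernels will land below these idealized bounds.

```python
# Figures quoted in this article (vendor estimates, subject to change).
ESTIMATED_TFLOPS = 2_299    # "estimated 2,299 TFLOPS"
UP_TO_PEAK_TFLOPS = 4_600   # "up to 4,600 peak TFLOPS at MXFP4"
HBM_BANDWIDTH_TBPS = 4      # "up to 4TB/s"


def ridge_point(peak_tflops: float, bandwidth_tbps: float) -> float:
    """Return the roofline ridge point in FLOPs per byte.

    In the roofline model, kernels with lower arithmetic intensity than
    this value are bounded by memory bandwidth; kernels above it are
    bounded by peak compute.
    """
    return (peak_tflops * 1e12) / (bandwidth_tbps * 1e12)


def attainable_tflops(intensity: float, peak_tflops: float,
                      bandwidth_tbps: float) -> float:
    """Idealized roofline bound: min(peak compute, bandwidth * intensity).

    TB/s multiplied by FLOPs/byte yields TFLOPS, so the units line up.
    """
    return min(peak_tflops, bandwidth_tbps * intensity)


if __name__ == "__main__":
    for label, peak in [("estimated", ESTIMATED_TFLOPS),
                        ("up-to peak", UP_TO_PEAK_TFLOPS)]:
        ridge = ridge_point(peak, HBM_BANDWIDTH_TBPS)
        print(f"{label}: ridge point = {ridge:.2f} FLOPs/byte")
```

With the quoted figures, the ridge point works out to about 575 FLOPs per byte (1,150 using the "up to" peak). In this simplified model, a workload below that intensity is bounded by HBM bandwidth rather than by TFLOPS.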

Enterprise AI Software – Develop with Your AI Stack. Your Way, Today.

We built AMD Instinct MI350P PCIe cards with open standards for cross-platform interoperability. This approach continues our strategy of enabling a fully open AI ecosystem and providing customer choice in enterprise environments.

The AMD enterprise AI stack serves as a foundational component, integrating seamlessly with a broad ecosystem of AI software and tools. It includes the Kubernetes GPU Operator for full life cycle management, cloud-native AMD Inference Microservices and native support for AI frameworks such as PyTorch. All this enables you to migrate inference workloads with minimal code changes.

The AMD enterprise AI reference stack is open source and provided to our partners at no licensing cost. It offers greater code transparency and helps reduce operating expenses. When combined with AMD Instinct MI350P PCIe GPU cards and partner-delivered solutions, the stack enables organizations to get up and running quickly on-premises without ongoing per-token charges.

Native Acceleration for Enterprise AI Precision Levels

AMD Instinct MI350P PCIe cards support the spectrum of precision levels that enterprise AI models rely on most. Lower-precision MXFP6 and MXFP4 maximize raw TFLOPS and enable efficient model implementations, while higher-precision formats such as INT8 and BF16 benefit from the sparsity support on the AMD Instinct MI350P GPU to deliver efficient performance. Regardless of the precision, AMD Instinct MI350P PCIe cards are designed to deliver maximum GPU throughput and reduced memory usage to help lower power and cooling demands.

Support for FP8, MXFP8 and MXFP4 is a major reason AMD Instinct MI350P PCIe cards can process today’s AI workloads within standard, air-cooled data centers.
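To make the link between precision and memory usage concrete, here is a back-of-the-envelope sketch of weight storage at different formats. It rests on several assumptions that are not from this article: the model sizes are hypothetical, MX formats are assumed to carry one shared 8-bit scale per block of 32 elements (as in the OCP Microscaling specification), and only weights are counted, so KV cache, activations and runtime overhead are ignored. The 144GB capacity is the estimated figure quoted above, with 1GB taken as 10^9 bytes.

```python
# Effective storage bits per weight. MX formats add an assumed shared
# 8-bit scale per 32-element block (8 / 32 = 0.25 extra bits per element).
BITS_PER_ELEMENT = {
    "BF16": 16.0,
    "FP8": 8.0,
    "MXFP8": 8.0 + 8 / 32,
    "MXFP6": 6.0 + 8 / 32,
    "MXFP4": 4.0 + 8 / 32,
}

HBM_CAPACITY_GB = 144  # estimated per-card capacity quoted in this article


def weight_footprint_gb(num_params: float, fmt: str) -> float:
    """Return weight storage in GB (10^9 bytes) for the given format."""
    return num_params * BITS_PER_ELEMENT[fmt] / 8 / 1e9


def weights_fit_on_one_card(num_params: float, fmt: str,
                            capacity_gb: float = HBM_CAPACITY_GB) -> bool:
    """True if the weights alone fit in one card's memory (no headroom)."""
    return weight_footprint_gb(num_params, fmt) <= capacity_gb


if __name__ == "__main__":
    # Hypothetical model sizes, chosen only for illustration.
    for num_params in (70e9, 200e9):
        print(f"{num_params / 1e9:.0f}B parameters:")
        for fmt in BITS_PER_ELEMENT:
            gb = weight_footprint_gb(num_params, fmt)
            fits = weights_fit_on_one_card(num_params, fmt)
            print(f"  {fmt:<6} {gb:8.2f} GB  fits on one card: {fits}")
```

Under these assumptions, a hypothetical 200-billion-parameter model needs 400GB for BF16 weights but about 106GB at MXFP4, which is the kind of reduction that lets a larger model stay within a single card's memory.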

Deploy Enterprise AI Where You Are Today

With AMD Instinct MI350P PCIe cards, your enterprise can move quickly from bare-metal infrastructure to production-ready AI systems on a strong foundation. They enable you to migrate workloads without code rewrites, integrate with existing AI pipelines and scale with evolving workloads.

Adopting AI doesn’t mean rebuilding infrastructure from the ground up. With AMD Instinct MI350P PCIe cards, enterprises can run more models and serve more users within their existing data centers.

To learn more, visit https://www.amd.com/instinct.

What Customers Say About AMD Instinct MI350P PCIe GPUs

OEM Partners

“For enterprises serious about AI, on-premises infrastructure isn’t a compromise. It’s a competitive advantage delivering the control, security and predictable outcomes that matter most. Dell PowerEdge servers paired with the AMD Instinct MI350P PCIe card give customers a direct path to that advantage. Together, Dell and AMD offer industry-leading AI compute power and performance so enterprises can stop evaluating and start innovating.”

David Schmidt

Vice President, Product Management, Dell Technologies

“HPE is committed to helping customers operationalize AI for their business. With AMD’s MI350P, we are expanding HPE ProLiant server options available to customers that will deliver AI performance, efficiency and enterprise-grade reliability.”

Krista Satterthwaite

Senior Vice President and General Manager, Compute, HPE

“Our work with AMD on Instinct MI350P reflects the growing need to tightly integrate AI compute with intelligent networking and security. MI350P creates new opportunities to deliver scalable, resilient AI infrastructures that connect data, models and users across the enterprise.”

Jeremy Foster

General Manager and Senior Vice President, Cisco Compute

“We’re working with AMD to support AMD Instinct MI350P on Lenovo’s AI-optimized infrastructure, helping customers move AI from experimentation into production. Lenovo and AMD are partnering to give enterprises a powerful new option to deploy scalable, energy efficient AI systems tailored to real-world business outcomes.”

Scott Patti

Vice President, Infrastructure Solutions Group, Lenovo

“Supermicro is collaborating with AMD to support AMD Instinct MI350P in our high-density, modular AI server platforms designed for rapid deployment at scale. AMD Instinct MI350P fits naturally into our building block architecture, enabling enterprises to accelerate AI adoption while optimizing performance, power and time to value.”

Vik Malyala

President and Managing Director EMEA; SVP, Technology & AI; Supermicro

“We’re working alongside AMD to expand AMD Instinct MI350P support across our AI server portfolio, bringing open and scalable AI infrastructure to a broader set of customers. With its PCIe based design, AMD Instinct MI350P enables flexible deployment and seamless integration into systems, allowing enterprises to build high-performance AI environments with the flexibility and efficiency required to scale globally.”

Daniel Hou

General Manager, Giga Computing, Gigabyte

Software Partners

“As enterprises move beyond AI experiments, the real challenge is operationalizing the hardware at scale. Our collaboration with AMD, on AMD Instinct MI350P, reflects a shared commitment to an open, scalable AI stack. Pairing this with Red Hat AI platforms allows organizations to deploy and manage workloads across the hybrid cloud, helping them bridge the gap from experimentation to production-grade AI.”

Brian Stevens

Senior Vice President and AI Chief Technology Officer, Red Hat

“Our customers are building real-time, AI-driven applications that demand low latency, global scale, and security they can trust. The AMD Instinct™ MI350P’s footprint and memory profile make it a compelling option for distributed inference workloads, where bringing intelligence closer to users is essential. We’re encouraged by AMD’s continued innovation in accelerator design, and the role purpose-built inference silicon will play in enabling agentic AI at the edge.”

Shawn Michels

VP of Product – Cloud Computing, Akamai

“Through our partnership with AMD, VMware Cloud Foundation on AMD Instinct MI350P GPUs will bring the flexibility, resource-optimization and operational simplicity of virtualization to AI deployments. Organizations will be able to manage AI accelerators within their existing virtualized environments without data center redesigns, enabling them to scale AI workloads efficiently while lowering total cost of ownership through better infrastructure utilization.”

Paul Turner

Chief Product Officer, VCF Division, Broadcom

“We see MI350P as a key enabler for deploying enterprise-grade AI across customer experience and automation workflows. Working with AMD allows us to scale our AI solutions with the performance and efficiency customers need to drive real business impact.”

Tushar Shah

Chief Product Officer, Uniphore

“We’re working with AMD to support MI350P as part of our mission to bring secure, production ready AI orchestration to the enterprise. MI350P enables customers to scale AI workloads reliably, helping bridge the gap between experimentation and production.”

Luke Norris

Co-founder and CEO, Kamiwaza

“Our collaboration around MI350P reflects AI’s growing role in enterprise decision-making and trust. MI350P gives us the performance foundation to help customers deploy transparent, accountable AI systems at meaningful scale.”

Lloyd Cope

Chief Revenue Officer, Seekr

“At Nutanix, we’re working closely with AMD on plans to support MI350P as part of an open, full-stack enterprise AI platform spanning infrastructure, Kubernetes, and hybrid cloud operations. MI350P enables customers to run AI inference and agentic workloads at scale with operational consistency, openness, and production-ready performance across hybrid environments.”

Ketan Shah

VP Product Management, Nutanix

This document contains preliminary performance estimates that are based on AMD engineering projections or early measurements as of April 2026 and are subject to change. GD-247a.